People are increasingly relying on AI sources for health information, but is it safe to do so?

Worldwide, 1 in 4 queries to ChatGPT are health-related, while almost 1 in 10 Australians reported that they had asked ChatGPT about their health [1].

However, independent studies consistently show that AI tools give unsafe health advice. One study found that ChatGPT Health failed to recommend emergency care when it was needed in over 50% of cases. At the same time, it did suggest emergency care to 64% of people who had a non-urgent problem [2].

Recently, Google removed several AI Overviews on health topics after a Guardian investigation found the advice about blood test results was dangerous and misleading.

Clearly, AI tools don’t merit the level of trust that people are placing in them.

The Sydney Health Literacy Lab has developed an education resource for using AI more safely. It suggests asking AI only those questions that have a straightforward factual answer and for which credible information is readily available, preferably while also checking other sources.

The Lab points out that ChatGPT will:

- Sound authoritative and confident, even when it is wrong

- Make up references, or

- Provide real references that do not actually match the information given by the AI [3].

In Australia there are a number of high-quality, credible health resources available online that provide a safer alternative to AI tools. These include healthdirect and the Better Health Channel (both government funded), as well as the healthdirect phone line, which allows people to speak to a nurse about their concern. There are also many helplines that provide support and information about specific health conditions. These resources are listed below.

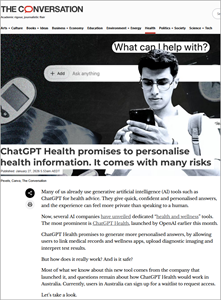

ChatGPT Health promises to personalise health information. It comes with many risks.

Melbourne, Sydney, The Conversation, 2026

ChatGPT Health allows users to link medical records to chat. But it hasn’t been independently tested and will make mistakes.

Sydney, The Guardian, 2026.

ChatGPT Health regularly misses the need for urgent medical care and frequently fails to detect suicidal ideation, a study of the AI platform has found, which experts worry could “feasibly lead to unnecessary harm and death”.

What’s the best way to use ChatGPT for health questions?

Sydney, Sydney Health Literacy Lab, 2025.

Educational resource to assist people to assess what types of health queries can be safely answered by ChatGPT.

Canberra, Healthdirect Australia, 2026.

Comprehensive health information website. Includes symptom checker, information on health conditions and medicines and a directory of services.

Melbourne, Victorian Department of Health & Human Services, 2026.

Health information website, includes information on medical conditions and health and wellbeing.

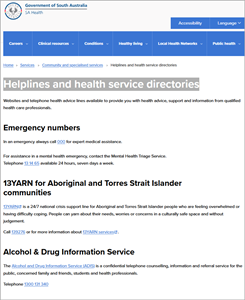

Helplines and health service directories

Adelaide, SA Health, 2026.

Online and telephone health advice lines available to provide you with expert health advice, support and information.

To see more resources on this topic, as well as other items added to the catalogue last month, visit our library homepage. Please contact us for more information or assistance in accessing resources.

1. https://theconversation.com/chatgpt-health-promises-to-personalise-health-information-it-comes-with-many-risks-273699

2. https://www.theguardian.com/technology/2026/feb/26/chatgpt-health-fails-recognise-medical-emergencies

3. https://www.sydneyhealthliteracylab.org.au/onlinehealthlit-chatgpt

Posted 29 April 2026
